
A ROBOTIC BRAIN THAT THINKS LIKE A HUMAN

CyberBrain is building a reasoning-first robotic brain framework that runs end-to-end on real hardware — enabling robots to understand natural human instructions, reason about context, and act in unscripted real environments.

No teleoperator. No scripted skill set. No task-specific pre-training.

Human instruction: “Natural human instruction”

CyberBrain reasoning

Robot action: Physical action

Memory: Experience updates context

THE WORLD WE WANT TO BUILD

AI agents can research, write, code, and reason — but they live inside a screen. CyberBrain brings that kind of cognitive partner into the physical world.

Company Intro

A short introduction to the reasoning-driven cortex behind our robotics platform.

A CONVERSATION, NOT A SCRIPT

An untrained user speaks naturally. The robot understands, looks around, picks up what is needed, and hands it across — with no teleoperator, no task-specific pre-training, and no scripted demo.

Human says

“Take this empty bottle and throw it away.”

CyberBrain Cortex

Instruction becomes embodied reasoning

Identify the bottle

Understand it is trash

Plan a safe grasp

Move to the bin

Throw it away

Update memory

PUBLIC DEMO

The public demo shows natural language, a real environment, no teleoperator, no task-specific pre-training, and no scripted skill set.

TASK BREAKDOWN

A natural request in a real environment.

An untrained human gives a natural request in a real environment.

CyberBrain identifies objects, context, and constraints.

The brain reasons about what the human means and what the body should do.

The body moves, grasps, hands over, or assists in the real world.

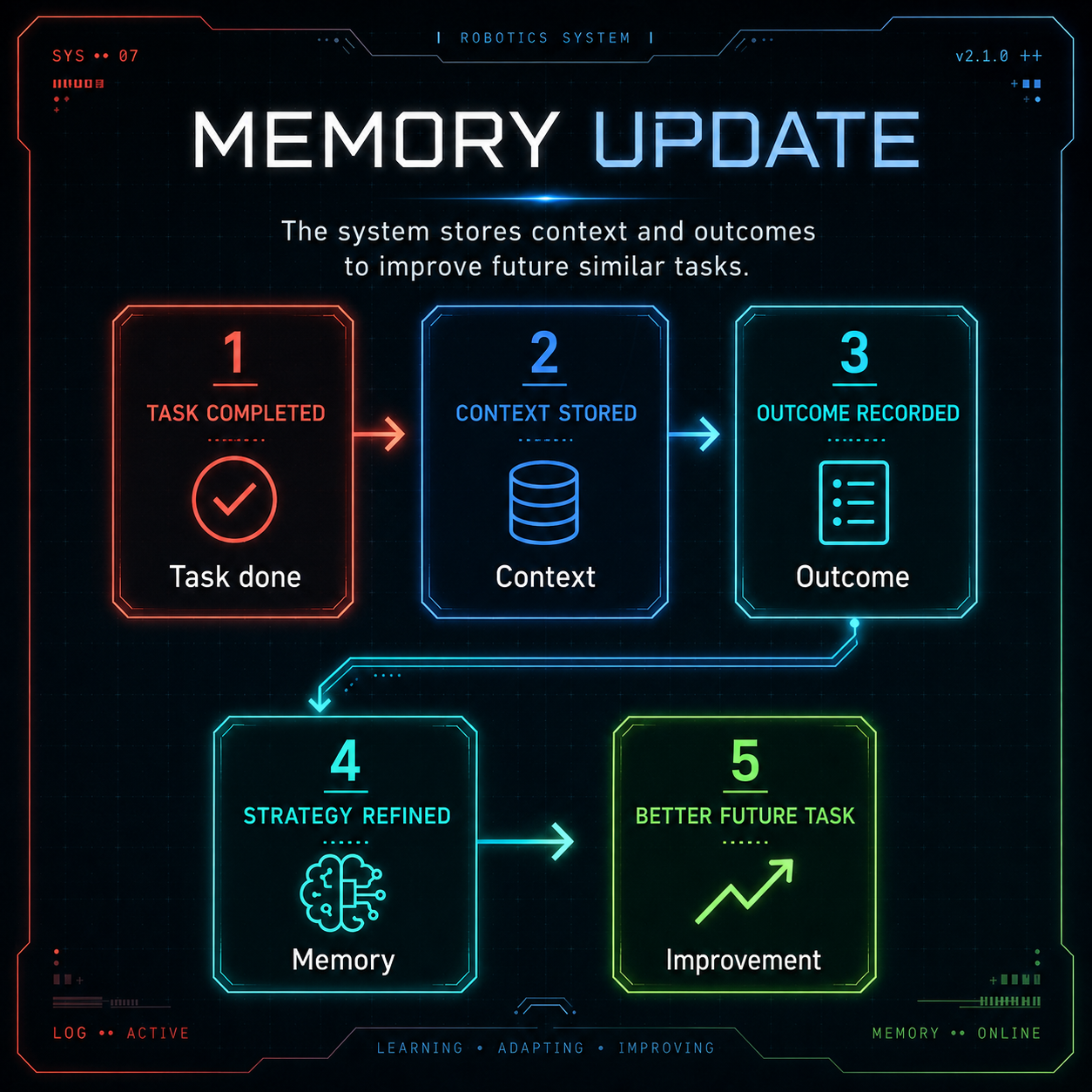

The system stores context and outcomes to improve future similar tasks.

BRAIN FRAMEWORK

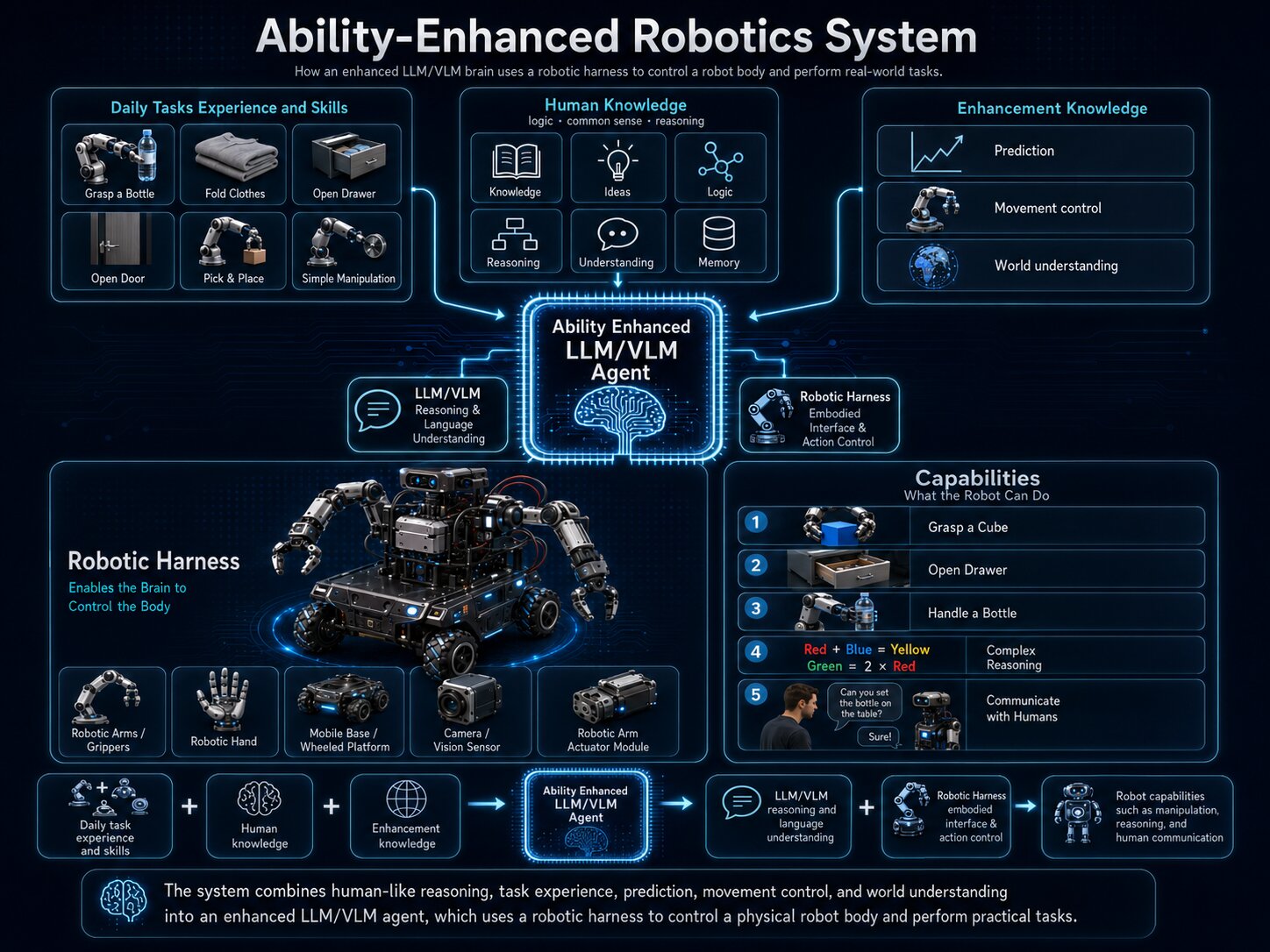

Reasoning agents already act like skilled humans inside software. CyberBrain engineers the framework that lets that cognitive system understand the physical world and control a robot.

The brain is not just an off-the-shelf model.

CyberBrain fine-tunes reasoning for spatial prediction, uncertainty, and grounded common sense.

CyberBrain is a brain framework, not just a model.

PLATFORM

A single instance of the CyberBrain framework can handle physical tasks and social interactions without per-task training.

Daily tasks become natural-language goals.

The same brain reasons about grasping, handover, object selection, constraints, and communication.

CyberBrain focuses on the missing cognitive layer above motor skills: reasoning, dialogue, adaptation, and orchestration across bodies.

COMPOUNDING CAPABILITY

Capability grows along two axes: better reasoning models and more real-world experience from completed tasks.

Each completed task can contribute context, outcomes, reasoning traces, and reusable strategies. Over time, CyberBrain builds a broader memory and skill library for similar future tasks.
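As a rough illustration of what a completed task might contribute, the sketch below stores an instruction, an outcome, and a strategy note, then recalls past experiences for a similar new instruction. The `Experience` and `ExperienceMemory` names, the record fields, and the word-overlap similarity are all assumptions made for this example, not CyberBrain's actual memory design.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Experience:
    """One completed task (hypothetical record layout)."""
    instruction: str
    succeeded: bool
    strategy: str  # short note on what worked or failed


@dataclass
class ExperienceMemory:
    """Toy store that recalls past tasks by word overlap with a new instruction."""
    records: List[Experience] = field(default_factory=list)

    def add(self, exp: Experience) -> None:
        self.records.append(exp)

    def recall(self, instruction: str, k: int = 1) -> List[Experience]:
        query = set(instruction.lower().split())

        def overlap(exp: Experience) -> int:
            return len(query & set(exp.instruction.lower().split()))

        # Keep only records sharing at least one word, best match first.
        matches = [e for e in self.records if overlap(e) > 0]
        return sorted(matches, key=overlap, reverse=True)[:k]


memory = ExperienceMemory()
memory.add(Experience("throw away the empty bottle", True, "grasp near the neck"))
memory.add(Experience("hand me the red cup", True, "approach from the side"))

best = memory.recall("please throw this bottle away")
print(best[0].strategy)
```

A real system would presumably use learned embeddings rather than word overlap; the point here is only that each finished task leaves behind a record that a later, similar request can look up.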

Today

Next

Later

ASYNCHRONOUS CONTROL

CyberBrain separates high-level reasoning from real-time body control. The body keeps moving smoothly while the brain interprets the scene, plans the next action, and updates memory.

Brain loop: deliberate

Body loop: continuous
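The split between a deliberate brain loop and a continuous body loop can be sketched with two threads that share only the latest target. This is a minimal illustration under stated assumptions: `SharedTarget`, `brain_loop`, `body_loop`, the timing values, and the one-dimensional "position" are all hypothetical stand-ins, not CyberBrain's runtime.

```python
import threading
import time


class SharedTarget:
    """Latest goal from the brain, read by the body (hypothetical interface)."""

    def __init__(self, value: float = 0.0) -> None:
        self._value = value
        self._lock = threading.Lock()

    def set(self, value: float) -> None:
        with self._lock:
            self._value = value

    def get(self) -> float:
        with self._lock:
            return self._value


def brain_loop(target: SharedTarget, goals: list, think_time: float) -> None:
    """Slow, deliberate loop: each reasoning step takes a while."""
    for goal in goals:
        time.sleep(think_time)  # stand-in for scene interpretation and planning
        target.set(goal)


def body_loop(target: SharedTarget, stop: threading.Event, trace: list,
              period: float = 0.005, gain: float = 0.3) -> None:
    """Fast loop: never waits on the brain, always tracks the latest target."""
    position = 0.0
    while not stop.is_set():
        position += gain * (target.get() - position)  # smooth step toward target
        trace.append(position)
        time.sleep(period)


target = SharedTarget()
stop = threading.Event()
trace = []

body = threading.Thread(target=body_loop, args=(target, stop, trace))
brain = threading.Thread(target=brain_loop, args=(target, [1.0, 0.5], 0.1))
body.start()
brain.start()
brain.join()      # the brain finishes its two plans
time.sleep(0.3)   # let the body settle on the final target
stop.set()
body.join()

print(f"body ticks: {len(trace)}, final position: {trace[-1]:.3f}")
```

The body loop runs many ticks for every brain update, so motion stays smooth even while the next plan is still being computed. The only coupling between the two loops is the shared target.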

SLAK architecture

Sensing, Logic, Action, and Knowledge separate what the system perceives, reasons about, does, and remembers.

CyberBrain Cortex

Sensing: Multimodal perception

Logic: Robotics-tuned reasoning model

Action: Continuous motion control

Knowledge: Context, memory, and skills

When a new frontier reasoning model ships, we can improve Logic. When a new sensor comes online, it plugs into Sensing. The system compounds capability instead of redoing training runs.
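One way to picture this modularity is as four narrow interfaces, where swapping the Logic component leaves the other three untouched. The sketch below is illustrative only: the `Sensing`, `Logic`, `Action`, and `Knowledge` types, their method names, and the toy implementations are assumptions for this example, not CyberBrain's API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Protocol


class Sensing(Protocol):
    def observe(self) -> Dict[str, str]: ...


class Logic(Protocol):
    def decide(self, instruction: str, scene: Dict[str, str],
               memory: List[str]) -> str: ...


class Action(Protocol):
    def execute(self, command: str) -> bool: ...


@dataclass
class Knowledge:
    """Remembers what was attempted and how it went."""
    notes: List[str] = field(default_factory=list)


@dataclass
class Robot:
    sensing: Sensing
    logic: Logic
    action: Action
    knowledge: Knowledge = field(default_factory=Knowledge)

    def step(self, instruction: str) -> str:
        scene = self.sensing.observe()                       # Sensing
        command = self.logic.decide(instruction, scene,
                                    self.knowledge.notes)    # Logic
        ok = self.action.execute(command)                    # Action
        self.knowledge.notes.append(
            f"{command}: {'ok' if ok else 'failed'}")        # Knowledge
        return command


class FakeCamera:
    def observe(self) -> Dict[str, str]:
        return {"bottle": "on table", "bin": "by door"}


class RuleLogic:
    def decide(self, instruction, scene, memory):
        if "bottle" in instruction and "bottle" in scene:
            return "move bottle to bin"
        return "ask for clarification"


class BetterLogic:
    """Drop-in replacement that also uses the bin's location."""
    def decide(self, instruction, scene, memory):
        if "bottle" in instruction and "bin" in scene:
            return f"move bottle to bin {scene['bin']}"
        return "ask for clarification"


class PrintingArm:
    def execute(self, command: str) -> bool:
        print("executing:", command)
        return True


robot = Robot(FakeCamera(), RuleLogic(), PrintingArm())
first = robot.step("throw away the bottle")
robot.logic = BetterLogic()  # upgrade Logic only; other layers are unchanged
second = robot.step("throw away the bottle")
```

Because each layer is reached only through its interface, replacing `RuleLogic` with `BetterLogic` requires no change to sensing, action, or stored knowledge, which is the property the paragraph above describes.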

TEAM

A 10-person team with deep experience across robotics, multimodal AI, embedded systems, and world models, built to turn robot reasoning into a shipped product.

10-person team

Average age: 26

Top labs: AI & robotics

30+ top-venue papers

Founder & CEO

Active in robotics competitions since 2008

Build a robot that communicates and interacts with people the way another person would.

PhD EE

4x RoboCup Junior World Champion: 2012, 2013, 2014, 2016

20+ national robotics competition awards

Nearly two decades of real-robot intuition

Co-Founder

M.S. Harvard, MIT Media Lab.

World-leading expert in multimodal models.

Technical Advisor

MIT EECS / Media Lab Professor.

Leading professor from the Multisensory Intelligence Group.

Robotics / LLM Expert

MIT & Harvard postdoc. PhD CS, Oxford.

World-leading 4D vision & world-model researcher.

Leading engineers & advisors

30+ top-venue papers across AI, robotics, multimodal learning, vision, and embodied intelligence.

MANY BODIES

CyberBrain is designed as a brain framework that can support general-purpose assistants and special-purpose robots across different bodies and environments.

Home, retail, hotel, workshop, and everyday human-facing assistance.

Industrial, rescue, underwater, hazardous, and remote environments.

CyberBrain can extend from wheeled platforms to legged robots that move, interact, and assist in real-world spaces.

FAQ

A concise reference for the robotic brain, SLAK framework, learning loop, and asynchronous runtime.

CyberBrain is a reasoning-first robotic brain framework that lets robots understand people, reason about context, and act through physical bodies.

CyberBrain is primarily building the robotic brain framework and platform. Real robots demonstrate that the framework runs end-to-end on hardware.

“No scripted skill set” means the robot can respond to natural human instructions in real environments without a pre-scripted skill sequence for that exact task.

The memory stores context, task outcomes, reasoning traces, and useful strategies so future similar tasks can be handled more intelligently.

SLAK is the four-layer framework: Sensing, Logic, Action, and Knowledge.

Asynchronous control lets the body continue moving while the brain continues reasoning, making interaction smoother and more responsive.

Contact

For pilots, partnerships, developer kit interest, or early technical conversations, reach out to the CyberBrain team.